TeamShifts: Designing an AI experience from magical to truly usable

AI Product Design + UX Research

SOME CONTEXT

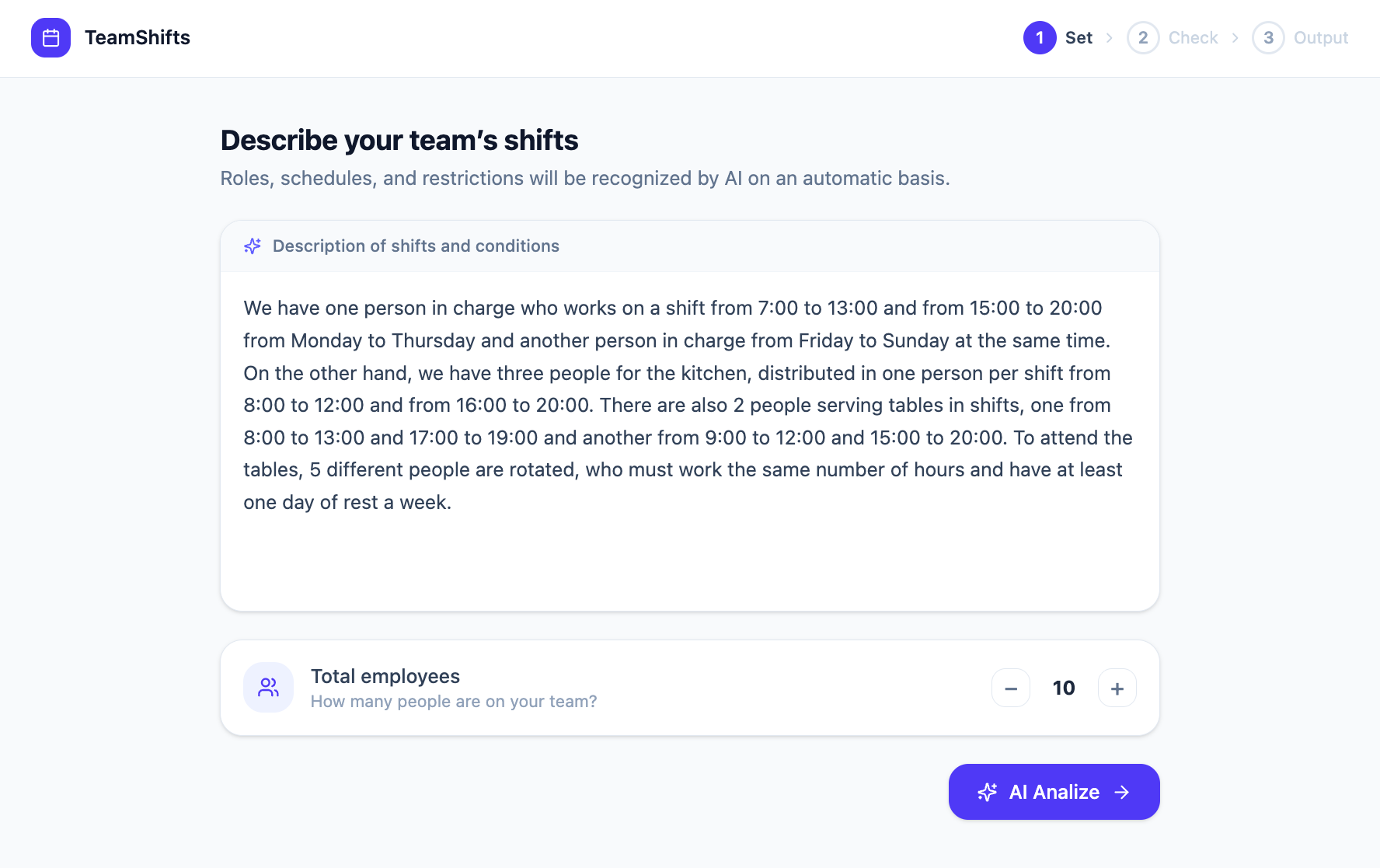

The initial idea felt almost magical: type a simple prompt and instantly generate perfect employee schedules. In theory, AI could remove hours of manual planning and transform a highly operational process into a natural conversation. In reality, the challenge was far more complex. This project became an exploration of how to design AI-powered products that balance automation, structure, and trust.

CHALLENGE

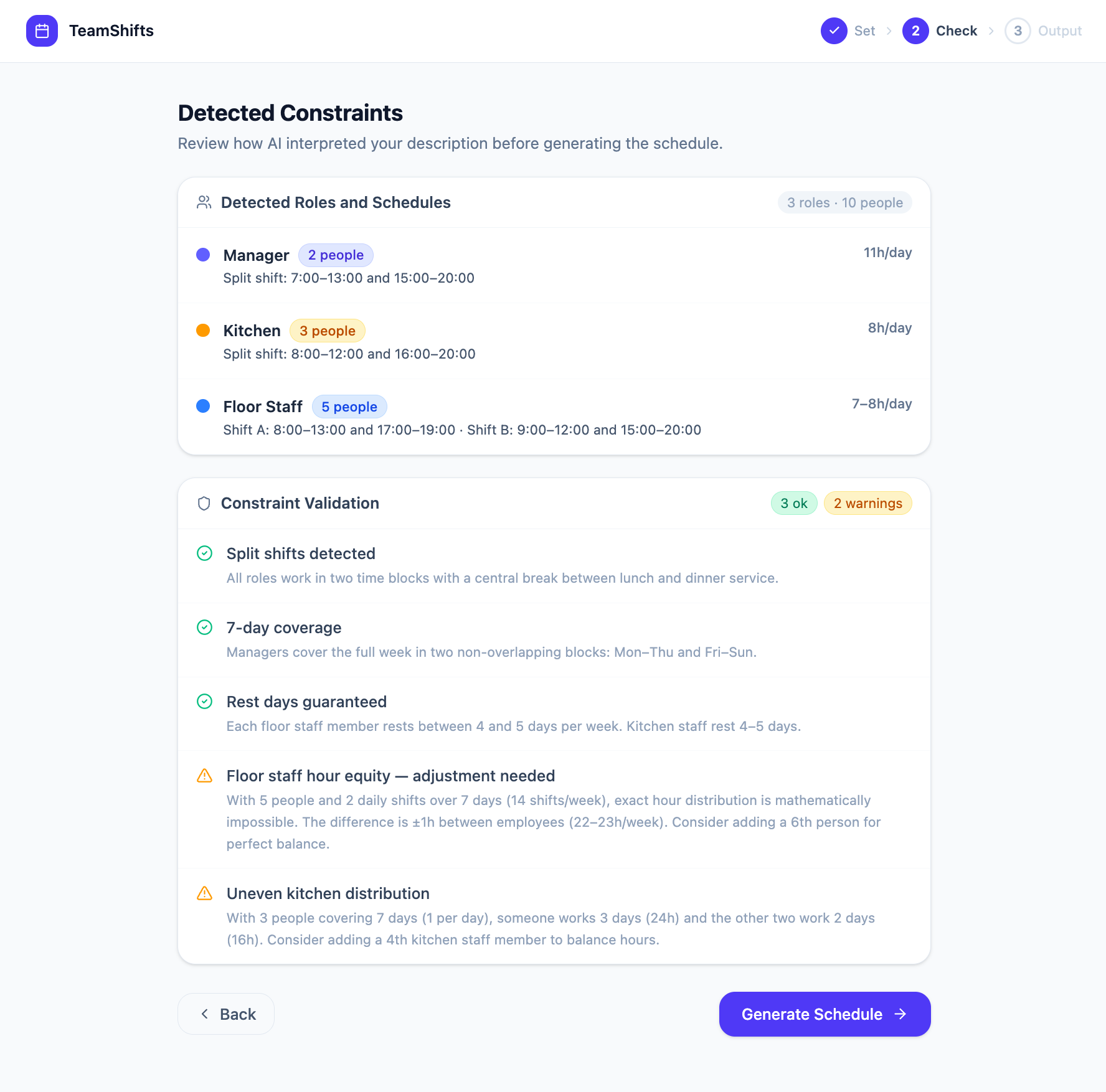

Shift planning is not just about assigning employees to dates. Every generated schedule needs to balance availability, legal constraints, staffing coverage, fairness, and individual preferences, while still remaining operationally viable. What initially looked like a technical problem quickly revealed itself as a design problem. The difficulty was not generating schedules, but generating schedules users could trust.

EARLY TESTING

The first usability tests exposed a critical issue. While the AI could generate schedules, results were often inconsistent or incomplete. Users repeatedly rewrote prompts trying to “guess” the correct phrasing. Many were unsure why the system failed or how to improve the output. The issue was not the AI itself, but the amount of ambiguity users had to resolve on their own.

FIRST ITERATION

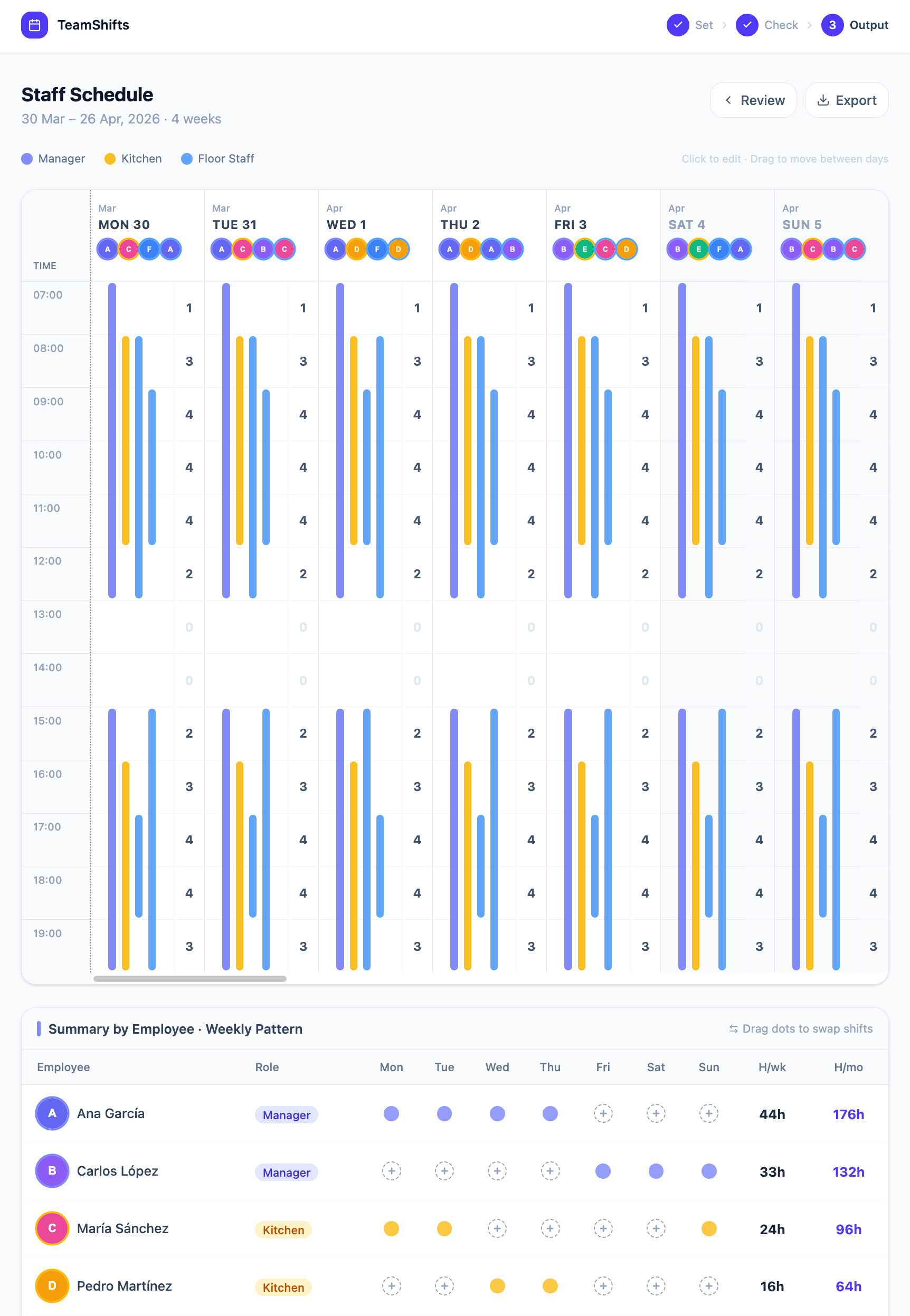

The first iteration introduced an advanced, guided flow that turned workforce planning into a sequence of focused steps. Users could define the scheduling period, import employees, configure staffing rules, and specify preferences before generating the final schedule. This changed the role of AI completely. Instead of interpreting vague instructions, the system received structured inputs that dramatically improved output quality and predictability.
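To make "structured inputs" concrete, here is a minimal sketch of what the guided steps might collect before anything is sent to the model. It is an illustration only, not the product's actual data model: `ScheduleRequest`, `StaffingRule`, `missing_inputs`, and every field name are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class StaffingRule:
    # Hypothetical rule: minimum number of people in a role per day.
    role: str
    min_per_day: int


@dataclass
class ScheduleRequest:
    # One field per step of the guided flow (all names are assumptions).
    start: date
    end: date
    employees: list[str] = field(default_factory=list)
    rules: list[StaffingRule] = field(default_factory=list)
    preferences: dict[str, str] = field(default_factory=dict)

    def missing_inputs(self) -> list[str]:
        """Return the problems a user must resolve before generation."""
        problems = []
        if self.end < self.start:
            problems.append("period: end date is before start date")
        if not self.employees:
            problems.append("employees: none imported")
        if not self.rules:
            problems.append("rules: no staffing rules configured")
        # Preferences must refer to employees that were actually imported.
        for name in sorted(set(self.preferences) - set(self.employees)):
            problems.append(f"preferences: unknown employee {name}")
        return problems


request = ScheduleRequest(
    start=date(2025, 3, 3),
    end=date(2025, 3, 9),
    employees=["Ana", "Ben"],
    rules=[StaffingRule(role="cashier", min_per_day=2)],
    preferences={"Ana": "no weekends"},
)
print(request.missing_inputs())  # prints []
```

The point of the sketch is that ambiguity surfaces as a specific, fixable message in the interface, so users no longer have to guess which part of a prompt went wrong.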

At this point the final product became a hybrid system where AI handled complexity while the interface provided clarity and control. The prompt remained part of the experience, but as an entry point rather than the primary workflow.

RESEARCH

We tested both approaches with 24 participants including HR managers, operations teams, and scheduling coordinators. Initial behavior strongly favored the AI-first experience:

%

started with the prompt interface

Eighty-five percent of users who initially chose the prompt eventually switched to the guided flow. Most described the prompt as attractive and fast, but unreliable for completing real scheduling tasks. Meanwhile, users who started with the advanced flow rarely abandoned it, largely because it gave them a stronger sense of control and confidence.

85%

of users who started with the prompt tried again using the guided flow

Prompt-only generation achieved a 38% success rate on the first attempt, while the structured advanced flow reached 87%. Confidence scores followed the same pattern. Users preferred spending a bit more time configuring the system if the outcome felt predictable and reliable.

DISCOVERY VS. DELIVERY

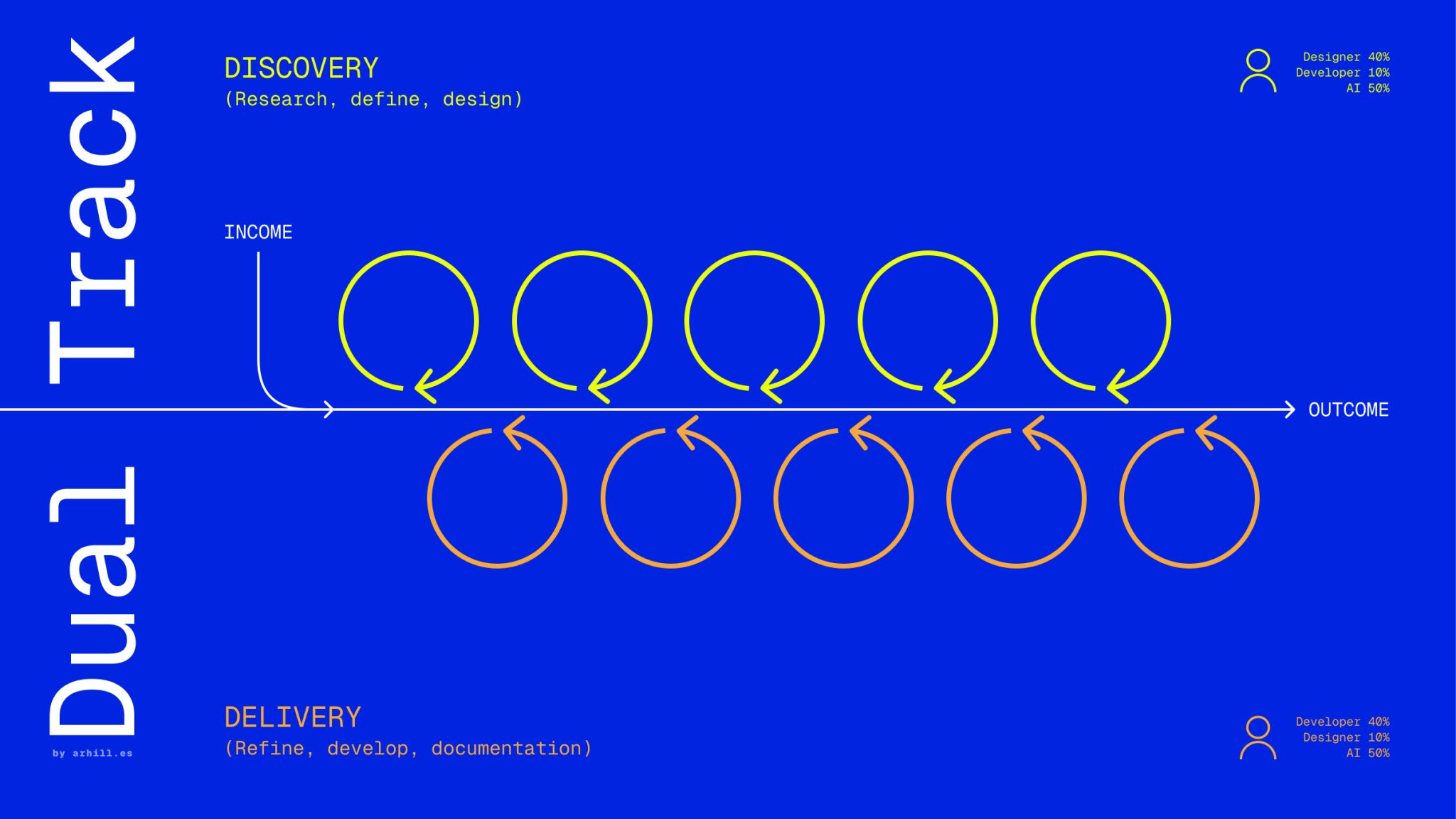

By combining AI prototyping tools with a Dual-Track approach instead of a traditional Scrum-only process, we rapidly built an MVP to validate our core hypothesis with real users. This allowed us to test, learn, and iterate continuously within days rather than weeks, turning AI-driven speed into faster product learning and a more effective final solution.

CONCLUSIONS

This project fundamentally changed the way I think about AI product design. The best AI experiences are not the ones that remove interfaces completely. They are the ones that carefully balance flexibility, guidance, and trust in situations where the outcome truly matters.